From Blackboards to Black Vestments: Where Do We Put Our Trust?

Experts' "blah blah" words, the inevitability narrative, and digital theophanies

For those of you who prefer to read off paper rather than the screen, I have converted the post into an easily printable pdf file. Remember to come back and share your thoughts and comments! You can access the file here:

From Blackboards to Black Vestments: Where Do We Put Our Trust

“Neither reason nor faith will ever die; for men would die if deprived of either.”

- G.K. Chesterton

It’s a cold midweek evening in January, and a friend is giving us a private tour of the Perimeter Institute for Theoretical Physics. From the outside the building looks like a space station, with black aluminum walls and slanted golden windows, and from the inside its flowing angular spaces of concrete and glass give it the feel of S.T.A.R. Labs of DC Comics. You feel you might just run into The Flash, although more likely it would be the ghost of Stephen Hawking, who had an office here for a time.

This is a place of big ideas. There are chalkboards everywhere1. Hundreds of physicists and students work at the Institute and go through over 6000 pieces of chalk each year, scratching out equations on cosmology and quantum reality and everything in between. The equations are everywhere, in every glass-walled office and seminar room and even in the bistros, the numbers and symbols scrawled out in white dust like alien languages. Being here makes you want to be a mathematician. Makes you want to ponder big ideas.

Tonight, in the Institute’s auditorium, a panel of experts has gathered to discuss one of those big ideas: Artificial Intelligence: Should it Be Trusted? It begins with an inspirational video filled with images of great explorers, from the scientific to the aesthetic, from Albert Einstein to David Bowie, set to music that stirs a sense of impending wonder. As the video draws to a close, a bearded assistant raises his hands and begins to clap, which is the pre-arranged cue for the audience to start applauding as the panelists take the stage.

There’s an industry rep, two professors, and a NASA researcher, while the moderator is an engineering director from Google. With so much brain power, we’re hoping to see ideas clash. Whether we should trust AI has been on a lot of people’s minds since ChatGPT went viral in early 2023. The man from NASA points out it took humans 300,000 years to master language, but it took AI about a year2. That’s a bit unsettling. What’s coming next?

Everyone on the panel seems to agree that we need to see AI in a balanced way. As the man from NASA puts it, AI isn’t just an “autocomplete engine”, but it doesn’t have “anthropomorphic agency” either. So no, your smart toaster oven probably isn’t going to take over the world.

That gets a chuckle. Even our 11-year-old son laughs. But for the most part the conversation on stage is abstract. There are only so many times you can hear blah-blah words like data privacy, regulation, policy, innovation before your head gets fuzzy. Which isn’t to say these things aren’t relevant. But the longer the panelists talk, the more we notice something missing from the discourse. We might call it the quantum elephant in the room, glaringly obvious, yet strangely invisible.

The pre-AI wave of digital tech—internet, smartphones, social media, virtual reality—has given us new conveniences and capabilities, but arguably at the cost of worsening our concentration and mental health, fueling social division, and manipulating us for profit and political gain. Compared to all this, AI’s impact could be exponentially greater. Why aren’t the panelists talking about this?

And there’s one more elephant to which the panelists seem oblivious, though it’s charging across the stage and beating its hooves. Digital tech and now AI aren’t just raising questions about data privacy or whether our smart toaster oven is scheming behind our back. They are doing something more fundamental: changing where we place our deepest trust.

And the survey says…

Our ancient ancestors beheld gods in lightning. Our modern ancestors proved lightning is a kind of electricity and learned how to run it through a wire. We of the machine age have given that electricity intelligence and made it fly around the world, transmitting thoughts and wonders through the internet, devices, and AI.

We got this far by trusting in technology, although our trust is now being challenged like never before. Which brings us to the results of our poll “Machine Peril or Machine Promise” from last week.

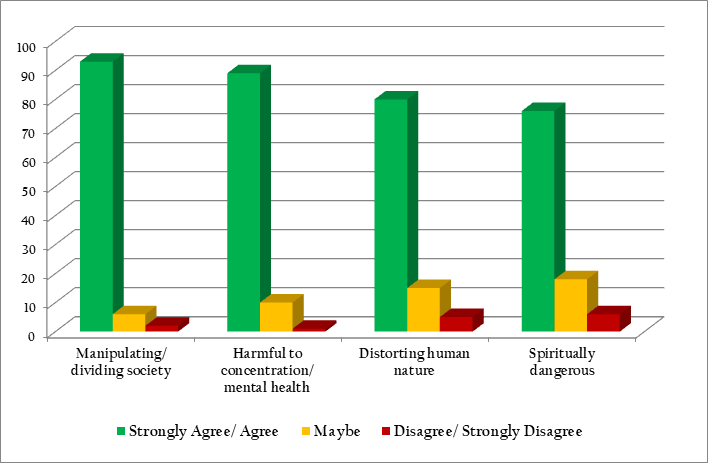

Over 800 readers participated in the poll, whose results give a snapshot of reader concerns about digital technologies. Here’s what we found:

The Biggest Concern: 93% agreed or strongly agreed that digital tech is manipulating and dividing society.

The Second Biggest Concern: Close behind were concerns about concentration and mental health, with 89% agreeing or strongly agreeing.

Human Nature and Spirit: A smaller, but still sizeable, percentage of respondents agreed or strongly agreed that digital tech is distorting our human nature (80%) or is spiritually dangerous (76%).

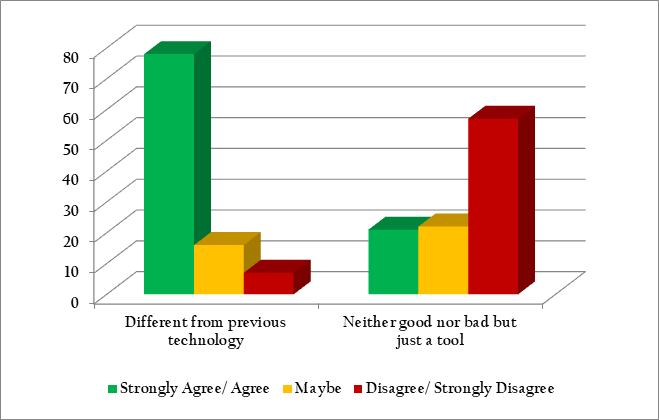

Digital Tech is Different: A strong majority of respondents (78%) agreed or strongly agreed that digital tech is different from previous technologies like TV or the printing press. Only a minority agreed or strongly agreed that digital tech is neither good nor bad, but just a tool (21%) while a majority disagreed/strongly disagreed (57%). Overall, these poll results suggest that our readers view digital tech as qualitatively and perhaps morally different from other earlier technologies and tools.

Feelings of Ambivalence: Despite respondents’ worries about tech, not all attitudes were strongly negative. There was also some ambivalence. In response to the idea that digital tech provides us with healthy and positive experiences, 30% agreed or strongly agreed, 25% disagreed or strongly disagreed, while the largest share weren’t sure (46%). When asked whether digital tech is an overall net benefit to society, a plurality disagreed or strongly disagreed (47%), while many were unsure (39%), and only a minority agreed or strongly agreed (14%).

Transhumanism: Two questions in our poll tapped into transhumanism, or the belief that people might one day transcend human nature through technology. A majority of our readers had generally negative attitudes toward transhumanism. 71% of respondents disagreed or strongly disagreed with the idea that digital technologies will help human beings evolve as a species, while only 10% agreed or strongly agreed. When it came to whether technology might one day improve life by giving us superhuman mental abilities, a majority again either disagreed or strongly disagreed (86%), and only a minority agreed or strongly agreed (3%).

That sense of alienation

Our poll results are only a sampling of our own readership. What would the poll results look like for the general population? We don’t know. But for those readers who might have wondered “Am I alone in my concerns about technology?”, our findings give a resounding answer: Take heart, there are many others like you.

We also know that if our readers had been at the trust-in-AI talk that night, some, like us, would have wanted to stand up and shout, “Why aren’t you people digging into the real issues, like how AI might distort human nature, or further divide society, or impact our mental or spiritual health?”

We were curious what our three children (aged 11-18) made of the talk. Even though the question of whether we should “trust” AI will be of greatest relevance to their generation, they were, surprisingly, among the very few under-20s in the crowd. As we had only been able to obtain four tickets3, Ruth decided to watch from a live feed in an overflow room upstairs, as we felt that hearing these issues discussed (by people other than their parents) was an important opportunity for our kids. An added advantage was that Ruth could settle into a comfortable lounge chair at the back of the room and produce several inches of a shawl she had been working on while listening to the lecture.

When the talk ended, everybody retreated into a foyer for chicken shish kebab, drinks, and informal conversation. In this more relaxed setting, we expected to find a bit more understanding for our concerns.

Before venturing to pose our questions to the presenters, we asked our kids what their impressions of the talk were. Our fifteen-year-old seemed to have captured the essence of it: “It felt like they were saying lots of fluent, abstract words that did not actually hold any meaning.”

A Nobel laureate was among the crowd, and Ruth made a beeline in that direction, figuring that the laureate would surely understand the deeper issues. Ruth started out by asking, “Do you have any concern that AI will lead to a loss of motivation to learn anything?”, “Languages are part of what makes us human; learning to use language well changes our perceptions, our heart, and how we relate to each other. What happens to us when we abdicate our language?”, and “Do you think that AI technology is fundamentally different from the technologies that have come before?”

The laureate shrugged off our worries about digital technologies wholesale, suggesting that these technologies were no different from TV and the printing press. People would adapt, we were assured. It was all simply another step in the process of technological development. The questions seemed to be received as if they were indeed a bit silly or irrelevant.

Other experts we chatted with struggled at first even to understand what we were talking about, and once they did, didn’t have much to say. What was going on here?

The title of the evening’s talk was Artificial Intelligence: Should it be Trusted?, yet we came away feeling it should have been, Should We Trust the Experts Who Ask if AI can be Trusted?4

Marshall McLuhan’s grandson relates how his grandfather attended the Bilderberg Group in 1969, a meeting with people “who were supposed to control the world”, and had a similar experience: “Frankly, I was staggered at the very low level of awareness of the contemporary world exhibited by all the guests present… Ordinary people live thirty years back in a state of motivated somnambulism.”

When people don’t understand your concerns, you feel alienated, disconnected, even a bit confused. You start to wonder, Am I the crazy one?

Meanwhile, another part of you, the sensible part, starts to lose faith in the experts who are supposed to be guiding us. The panel that night included a speaker from NASA, an industry rep, and two people from academia, but it should have also included, maybe, a plumber, a farmer, a nurse, a mother, a priest, an elderly person, a teacher, a writer, or an artist. People who specialize in real people or real things. People who work with hands or hearts.

The alienation we sensed that night is part of the growing alienation that many are beginning to feel. One tech commentator senses it too, and makes this prediction, which is really an elaboration of Wendell Berry’s machine versus creature distinction:

Across the coming years conflict between those who want to [be] all-knowing, immortal, and infinite machines and those who want to remain creatures will only grow more acute. Both sides have legitimate, but radically different, views of what it means to be a fully realised human being. We are going to need new ways to manage this conflict. And when human-machine fusion is made real, then this conflict will be nothing less than the central political question. How do these two groups — let’s call them People of the Machine and People of the Earth — live well together?

Right now, we have no answers to this question…Instead, we are caught between two sets of stories.

Despite their specific concerns about tech, the people we encountered at the AI discussion seemed largely unconcerned about tech’s impact on human beings, whether as a species or as spiritual beings. While they may not have been outright People of the Machine, they certainly didn’t seem like People of the Earth either. They didn’t seem to have any anchored idea of what it means to be a person—as if that wasn’t even a question at all.

A formula for atheism?

A recent study involving over three million people globally suggests that societies with greater automation and AI tend to have lower levels of religious belief. The study included not just Christians but people of other faiths (e.g., Buddhists and Muslims). A clue that might explain this decline was suggested in one part of the research, which found that

learning about AI in an intensive day-long seminar increased people’s belief that technology has given humans superhuman abilities (i.e., to “play God” and “break laws of nature”). Attending this seminar also decreased the perceived importance of prayer and service attendance at work among highly religious people, but not less religious people.

Learning about AI was associated with even bigger declines in religiosity than learning about scientific advances. All this suggests that if you want to stop people from going to church and praying, you shouldn’t argue with them. Don’t bother with logic. Bombard them with enthusiastic messaging about the awesome power of AI and other miracles like Tesla Bots folding laundry, and then fill their lives with these technologies. Most won’t even realize what’s happening.

Take the study with a few grains of salt, as it’s correlational and doesn’t establish causation. Still, the study brings a vital issue into focus—an issue long recognized within Christianity and Judaism, and perhaps other spiritualities. Putting our trust in a divine power, whether God or a universal conscious energy, means recognizing, at some level, that our existence is dependent on this God or higher power. When we no longer believe that we’re dependent on a higher power, and instead transfer our sense of dependence onto machines, we also transfer our trust.

This isn’t just a trust in one particular thing in life, but a total trust; trust for our security, our deepest hopes, our yearning to live, thrive, love and be loved.

A Theophany of snow and ice

Theophany is one of the Great Feast days of the Orthodox Church. The word comes from the Greek theophania, meaning “appearance of God”, and refers to the baptism of Jesus at the Jordan River, when the Spirit of God descends on him like a dove, and a voice calls out of the heavens, “This is My beloved Son, in whom I am well pleased.”

Almost two weeks before the AI talk, we attended a Theophany service by a frozen pond. There were chanters, who stood on a snowy pathway, breath misting the air as they sang the service, and there were a few youths who held candles, an icon, a golden cross. A couple strolled past with steaming glass cups of boutique tea. They looked cool. They might have wandered out of a nearby café. We felt weird, as if we had wandered out of the Middle Ages. But we understood that the ceremony, though weird from the outside, was meant to direct us toward something beyond this world.

That “something” was within this world too. We knew it in the chanting, in the candle flames, in each other, in the snow, ice, water, in spoken words. Around these things and beyond these things.

According to custom, the priest threw a small cross into the pond. It punctured the ice that covered the surface, and he pulled it back on a string. Then he brought the cross back to us and each of us kissed it. It’s hard to imagine anything more symbolically trusting between a group of people in a post-pandemic world. This wasn’t just talking close or shaking hands. Our lips pressed against the same piece of wood, from toddler to octogenarian. And there it was again—around these things and beyond these things.

An Orthodox friend of mine recently said something that stuck with me: “Attention is the currency of worship”.5 The thing we most give our attention to is, by default, the thing we elevate as the highest and most important of our lives.

We have a tendency to look for things to represent what we conceive as the highest. Technology develops in the same direction. Laser printers print words on flat paper at high speed. 3-D printers can currently print three-dimensional objects like trinket jewelry and car parts, although one day they may print functioning human organs. Will there be a final stage, where we harness subatomic particles to print ideas into physical reality? Then we might truly be gods.

Big Tech is racing ahead to manufacture digital theophanies—machines of superhuman intelligence6 and functionality—through AI and embodied robots. It might be as simple as an AI entity built right into your phone that functions like a personal sidekick, like a Morgan Freeman in your pocket, offering sage reflections and witty advice, or it could be a silicone-skinned bot that becomes an intimate companion7 who never grows old or bored of your jokes. As one expert assures us,

It won’t be long—a year or two—before we have sophisticated, purpose-built companion bots designed for relationships, sex, intimacy and marriage. They’ll be popular. And they’ll make lots of money. Technologically, we’re already there. The capacity just has to be unlocked.

And this barely scratches the surface of the coming digital theophanies. Can we trust them? The makers of tech know that trust is key. The makers know that the competition is not ultimately about which company comes up with the next best thing, but a competition to soothe our vulnerability and excite our hopes.

Most of the trust they are trying to sell us addresses our worries and questions about whether AI will function properly at specific tasks, like Will this AI system keep my data secure? or Does AI-supported medicine result in better healthcare for under-served groups? But there are more fundamental issues, less talked about. We don’t know how AI works. Even the software engineers who develop AI systems cannot fully explain how those systems arrive at their outputs. Can we trust a thing we will never understand? Can we trust a thing that is a representation of life, rather than life itself, to help us grow and thrive as human beings?

What about the experts, whose abstract blah-blah language leaves us feeling like they don’t understand our concerns, and who might see our spiritual talk as just a lot of woo-woo? Can we trust them? What about the makers themselves, who’ve proven to be geniuses at nudging and monetizing our thought processes?

“The great enemy of clear language is insincerity. When there is a gap between one’s real and one’s declared aims, one turns instinctively to long words and exhausted idioms.”

- George Orwell

The inevitability narrative

There is absolutely no inevitability, so long as there is a willingness to contemplate what is happening.

- Marshall McLuhan

We are constantly being presented with an Inevitability Narrative about technology. The Inevitability Narrative tells us that tech just has to happen, there’s nothing we can do, stop being so old-fashioned, it will work out for the best, and therefore, like smartphones and social media before it, our societal attitude toward artificial intelligence systems and robots will once again be “anywhere, anything, anytime, for anyone”—including for our children.

We don’t need to accept the Inevitability Narrative. It is just a story designed to maximize profits. We can push back in our families, our schools, and wherever our voices can be heard, and demand a different attitude that says “only in certain places”, and “not everything”, and “it depends on your age”.

We must craft a different story about technology. A story we can trust. A story with far more nuance than “anywhere, anything, anytime, for anyone”. Being People of the Earth doesn’t mean being a bunch of cranky sourdough-kneading Luddites who warn our children never to talk to chatbots. It means being discerning about where we place our deepest trust.

“None of the modern machines, none of the modern paraphernalia. . . have any power except over the people who choose to use them.”

- G.K. Chesterton, Daily News, July 21, 1906

There is no end of depth to the challenge of trust. Humans suffer from a bad case of bottomless vulnerability. It all begins at the beginning of life. If attention is the currency of worship, then touch is the prototype of all worship. The earliest trust that most humans experience is physical contact with a mother. Touch, trust, attention, love, a sense of worship—these are bound together in skin, warmth, flesh, breath, feeling. They remain bound throughout our lives. That is why the abuse of trust in relationships is so disturbing, and why the abuse of a child’s trust is most disturbing of all. Even if it’s never happened to us, we instinctively recognize the harm it causes at the deepest levels of what it means to be human.

At the very bottom of our vulnerability is the awareness of our mortality. We know that we will die. We know, yet we try to keep the knowledge out of sight, even while trying to make sense of it, as if we might overcome death. This is why People of the Machine are determined to be “all-knowing, immortal, and infinite” by fusing with technology. But even People of the Earth want to be all-knowing, immortal, and infinite, only not through wires, plastic, and cobalt. All of us experience the same awareness of mortality, and all of us yearn for a solution to the angst and suffering that come with it.

We see mortality in Holbein’s 1533 painting The Ambassadors. There—beneath the two elegant men, beneath the most advanced technologies of the day—is a skull. But it’s not always obvious, as the skull is distorted when viewed directly from the front of the painting, appearing as a slanted blur, like a strange object intruding from another dimension of space-time. The viewer needs to stand off to the right of the canvas to see the skull in proper proportion—as if Holbein8 is trying to say, maybe, that death is in the midst of life, yet we usually don’t see it clearly (which is probably a good thing).

And if you squint, you might pick out the Crucifix in the upper left corner, at the obscure fringes of the green drapery. A Christ in the shadows. A hidden theophany.

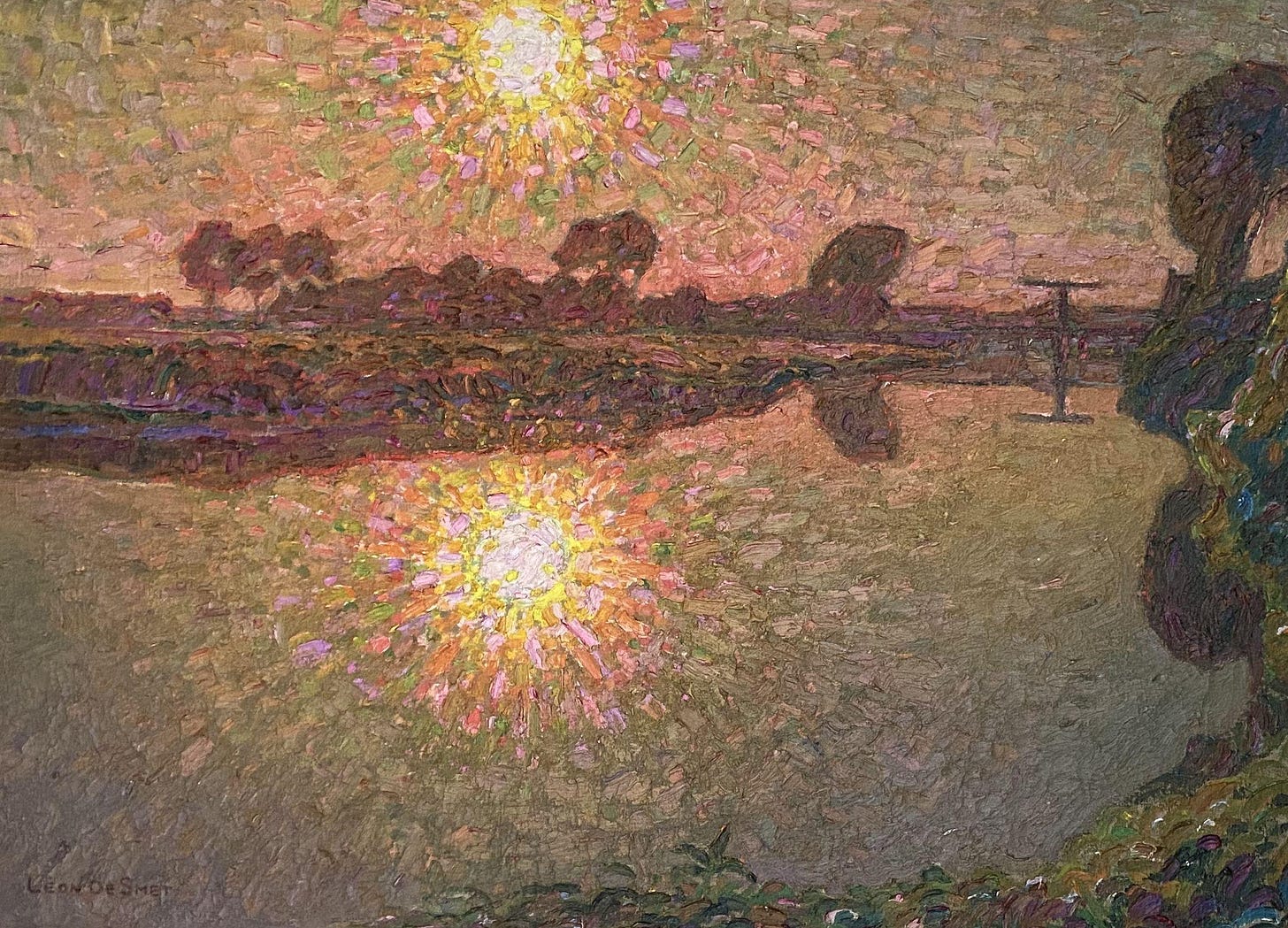

By chance, the Theophany service we happened to attend on that day of snow and ice occurred just outside the Perimeter Institute, before we knew that experts would soon be gathering inside its walls to talk about AI. We snapped the photo below as the procession passed the building and its golden windows. A golden cross, golden windows. Two kinds of gold, it seemed. Faith and rationalism. Tradition and technology. People of the Earth and People of the Machine.

These contrasts are often presented to us in competitive terms—faith versus rationality, tradition versus technology. As if these ways of understanding the world are mutually exclusive. But, as Chesterton reminds us, “neither reason nor faith will ever die; for men would die if deprived of either.”

We need both faith and reason, without reducing one to the other. They are complementary ways of experiencing the world.9 If these two ways of seeing don’t always fit together, if they feel like two incompatible lenses in a pair of glasses, or like incongruent objects in a painting, the limitation is not in the lenses or the objects, but in the viewer. We do not see wholly.

As AI begins to saturate our lives in the same way that the internet and devices have, many of us will want to reject it, out of fear of what it will do to ourselves and our children. That fear may well be justified. But the deeper choice can never be between faith or rationalism, tradition or technology. The choice is between placing our deepest trust in something that encompasses the whole of reality, versus something that does not.

Finding that all-encompassing something that we can trust is the hardest work of life. The striving for which we were made. Even when we find it, even when we think we glimpse that theophany in the shadows, or in the holy ritual, each treasure must be tested, every piece of gold bitten to see if it’s real—or a thing made for fools.

What is your response to the question “Can we trust AI?”

What questions do you think need to be asked with regard to AI?

What actions can we take to resist AI harvesting our language, images, art, etc.?

Please share your thoughts in the comments section!

If you found this post helpful (or hopeful), and if you would like to support our work of putting together a book on “The Making of UnMachine Minds”, please consider becoming a paid subscriber, or simply show your appreciation with a like, restack, or share.

To see an example of deep fake AI language capabilities take a look at this clip of Ethan Mollick speaking multiple languages. Thanks to

for this link!

The tickets were free to the public, but were snatched up within two minutes of becoming available online. A big ‘thank you’ to our friend who secured us some complimentary tickets :)

Elon Musk announced that the first human received an implant from Neuralink on Jan. 28. Neuralink states on its website that its mission is to “Create a generalized brain interface to restore autonomy to those with unmet medical needs today and unlock human potential tomorrow.”

Read this article from

to get an inside look at what can unfold when we expect an AI boyfriend to “love” us: What My AI Boyfriend Taught Me About Love - I created a monster, and it was me.

Holbein also famously painted The Body of the Dead Christ in the Tomb (which you can see at the Kunstmuseum in Ruth’s hometown Basel). It is said that Dostoevsky’s wife had to drag him away from the life-like depiction “lest its grip on him induce an epileptic seizure”. Apparently, Dostoevsky saw in Holbein “an impulse similar to one of his own main literary preoccupations: the pious desire to confront Christian faith with everything that negated it, in this case the laws of nature and the stark reality of death.”

Thanks to our friend

for his insights on “competition” versus “complementarity”, which he also explores in his concise book How Do You Know That?